Why the Most Valuable CAD Models Will Be the Most Interpretable | Zixel Insights

Teams often talk about "good models" but rarely explain what gives a CAD model long-term value. A model becomes valuable when people can understand it: not just the person who built it, but every engineer who touches it years later, every supplier who must interpret its behavior, and every AI tool that tries to reason about its structure.

A model that cannot be understood cannot be changed safely

Most of the cost in engineering comes from rework, not from initial design. Rework happens when a team member modifies something without understanding the consequences. An interpretable model reveals its logic at a glance: the feature order makes sense, the reasoning behind constraints is visible, and the relationships reflect how the system is supposed to behave.

Interpretability protects intent better than documentation

Intent is surprisingly fragile. It can disappear when a designer leaves, when documentation goes stale, or when a model evolves without explanation. Interpretability anchors intent inside the model itself, so that intent travels with the design rather than disappearing after handoff.

AI tools work better when models are interpretable

If constraint naming is ambiguous, an AI tool must guess what each constraint is for. Interpretable models help AI reason correctly: when the system understands the intent behind parameters, it can provide guidance that aligns with human thinking.
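To make the naming point concrete, here is a minimal sketch of a check that flags parameters a reader or an AI tool would have to guess at. Everything in it is an assumption made for illustration: the `Parameter` record, the `intent` field, the `AUTO_NAME` pattern, and the sample values are hypothetical and do not describe Zixel's data model or any particular CAD system's API.

```python
import re
from dataclasses import dataclass

# Hypothetical pattern for auto-generated names such as "D1", "dim_12", or "p3".
AUTO_NAME = re.compile(r"^(d|dim|param|p)_?\d+$", re.IGNORECASE)


@dataclass
class Parameter:
    name: str
    value: float
    unit: str
    intent: str = ""  # free-text reason the parameter exists (assumed field)


def ambiguous(params):
    """Return names of parameters whose purpose cannot be read from the model.

    A parameter is flagged if its name looks auto-generated or if no intent
    has been recorded for it.
    """
    return [
        p.name
        for p in params
        if AUTO_NAME.match(p.name) or not p.intent.strip()
    ]


# Illustrative sample data, not taken from a real model.
params = [
    Parameter("D1", 4.5, "mm"),
    Parameter("wall_thickness", 2.0, "mm", "minimum for injection molding"),
    Parameter("bolt_clearance", 0.5, "mm"),
]

print(ambiguous(params))  # ['D1', 'bolt_clearance']
```

In this sketch, `D1` is flagged for its auto-generated name and `bolt_clearance` for its missing intent, while `wall_thickness` passes because both its name and its recorded reason can be read directly from the model.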

Zixel Insight

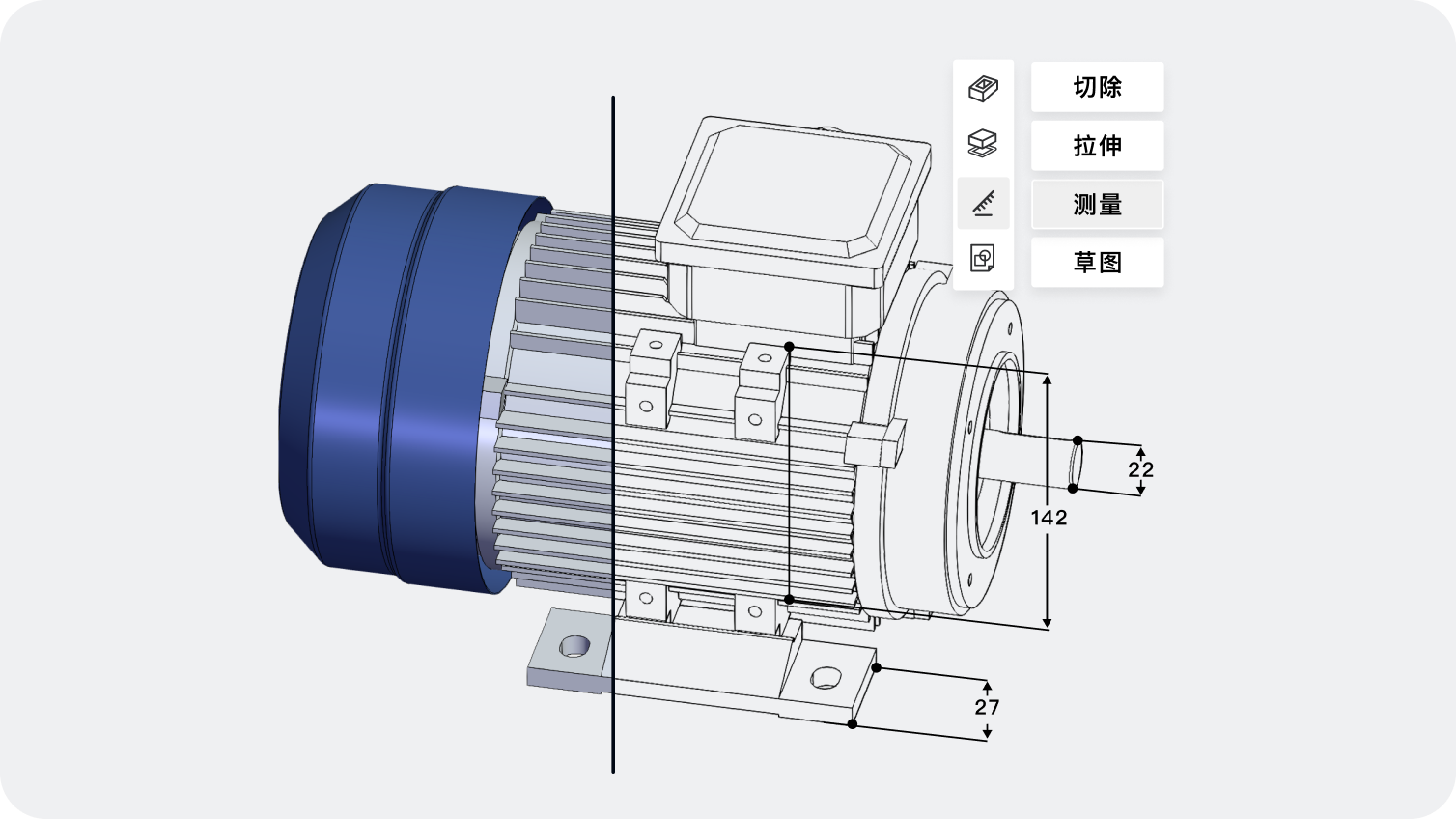

At Zixel, we see interpretability as what defines the next era of CAD value. Geometry alone cannot support collaboration or intelligent automation. A model becomes powerful when it reveals its logic and communicates its intent across teams.

