Why AI Will Force CAD Platforms to Become More Interpretable | Zixel Perspectives

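Introduction

Engineering teams have learned to live with the opaque parts of CAD. Designers are used to features that behave unpredictably and constraints that fail without much explanation. People talk about rebuild errors the way they talk about weather patterns. These frustrations are treated as part of the job. But once AI enters the modeling environment, that tolerance starts to fade. AI cannot operate in black boxes. It needs clarity. It needs structure it can read. It needs models that explain themselves. As soon as AI becomes a partner in design work, CAD platforms face a new requirement: they must become interpretable.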

AI cannot help when the system it learns from is a mystery

Most legacy CAD systems store intent implicitly. A feature works because of the order in which it was created. A constraint behaves a certain way because of a historical reference. These relationships matter, but they are not easy to observe. Engineers rely on experience to understand them. AI does not have that luxury. It needs explicit information. If a model's structure hides behind complex chains of dependencies, AI cannot draw meaningful conclusions or provide reliable suggestions.

Interpretability becomes necessary because AI's usefulness depends on it. Without transparency, AI cannot explain recommendations, assess risks, or predict how the model will react to change.

Engineers need to know why AI suggests something

Even when AI produces accurate suggestions, engineers will not trust it unless they understand the reasoning. They need to know why a constraint is considered fragile or why a feature order increases long-term risk. If AI cannot explain its logic, the designer is left with a guess, and guesses are not enough for critical decisions.

Interpretability builds trust. When AI highlights a structural issue and shows the pattern behind it, engineers can judge the insight on its merits. The conversation shifts from "Do I trust the output?" to "Does this reasoning align with my understanding of the model?"

Models are easier to maintain when they can explain themselves

Teams often inherit models that feel dense and fragile. People avoid touching certain features simply because they do not understand the structure behind them. If models become interpretable, this dynamic begins to change. When a tool shows how a change propagates or which constraints hold the model together, designers can refactor with more confidence.

AI accelerates this because it expects models to present clear logic. The pressure to support AI turns into a benefit for humans. Interpretability makes the model easier for everyone to navigate.
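To make "showing how a change propagates" concrete, here is a minimal sketch in Python. It assumes the feature tree can be exported as a map from each feature to the features it references. The feature names and the functions `downstream` and `load_bearing` are hypothetical and do not describe any particular CAD system's API.

```python
from collections import deque

# Hypothetical feature tree: each feature lists the features it references.
DEPENDS_ON = {
    "sketch_base": [],
    "extrude_body": ["sketch_base"],
    "hole_pattern": ["extrude_body"],
    "fillet_edges": ["extrude_body"],
    "shell": ["fillet_edges", "hole_pattern"],
}

def downstream(depends_on, changed):
    """Return the features that would rebuild if `changed` were edited."""
    # Invert the map so each feature knows which features depend on it.
    dependents = {name: [] for name in depends_on}
    for feature, parents in depends_on.items():
        for parent in parents:
            dependents[parent].append(feature)

    # Breadth-first walk over dependents, visiting each feature once.
    seen, order, queue = {changed}, [], deque([changed])
    while queue:
        current = queue.popleft()
        for child in dependents[current]:
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order

def load_bearing(depends_on):
    """Rank features by how many other features rest on them."""
    counts = {name: len(downstream(depends_on, name)) for name in depends_on}
    return sorted(counts.items(), key=lambda item: -item[1])

print(downstream(DEPENDS_ON, "extrude_body"))
print(load_bearing(DEPENDS_ON)[0])
```

In this toy tree, editing `extrude_body` reports `hole_pattern`, `fillet_edges`, and `shell` as affected, and `sketch_base` ranks as the feature the rest of the model leans on most. A real platform would need a richer graph (sketch constraints, external references, configurations), but the same idea applies: once dependencies are explicit data, both a designer and an AI assistant can query them directly.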

Interpretability helps organizations preserve their design knowledge

Engineering culture is often carried in the tacit knowledge of senior designers. When those designers leave or shift projects, a part of the organization's insight disappears with them. Interpretable CAD helps preserve this reasoning. When intent and structure can be read directly from the model, knowledge does not dissolve over time.

AI supports this by surfacing recurring patterns and highlighting the rationale embedded in older models. It becomes easier to understand why a design evolved the way it did. Interpretability becomes an investment in organizational memory.

AI pushes CAD toward a more rigorous internal language

Once AI needs to read and explain models, CAD platforms must adopt clearer internal representations. Relationships that were once handled implicitly must become explicit. Hidden dependencies must become visible. Ambiguous behaviors must be reduced.

This shift is not about making tools more restrictive. It is about making their structure easier for both humans and machines to reason about. A model that explains itself becomes more resilient, more reusable, and easier to adapt as requirements shift.
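One way to picture an explicit relationship is as a typed record that a tool can inspect and attach a reason to. The sketch below is illustrative only. The `Reference` class, the reference kinds, and the single rule in `FRAGILE_KINDS` are assumptions made for this example, not a description of how any real CAD kernel stores references.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Reference:
    feature: str  # feature that owns the reference
    target: str   # entity the feature points at
    kind: str     # hypothetical categories: "datum", "sketch", "generated_edge"

# Illustrative rule table (an assumption, not a real product's rule set).
FRAGILE_KINDS = {
    "generated_edge": "the edge is produced by an earlier feature and may be "
                      "renumbered or removed when that feature changes",
}

def explain_fragility(refs):
    """Return one human-readable finding per reference that matches a rule."""
    findings = []
    for ref in refs:
        reason = FRAGILE_KINDS.get(ref.kind)
        if reason:
            findings.append(f"{ref.feature} -> {ref.target}: {reason}")
    return findings

refs = [
    Reference("hole_1", "top_plane", "datum"),
    Reference("fillet_1", "edge_17", "generated_edge"),
]
for finding in explain_fragility(refs):
    print(finding)
```

The point of the design is that each finding carries its own reason. The tool reports which reference it considers fragile and why, so an engineer can check that reasoning against their own understanding of the model.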

The Zixel perspective

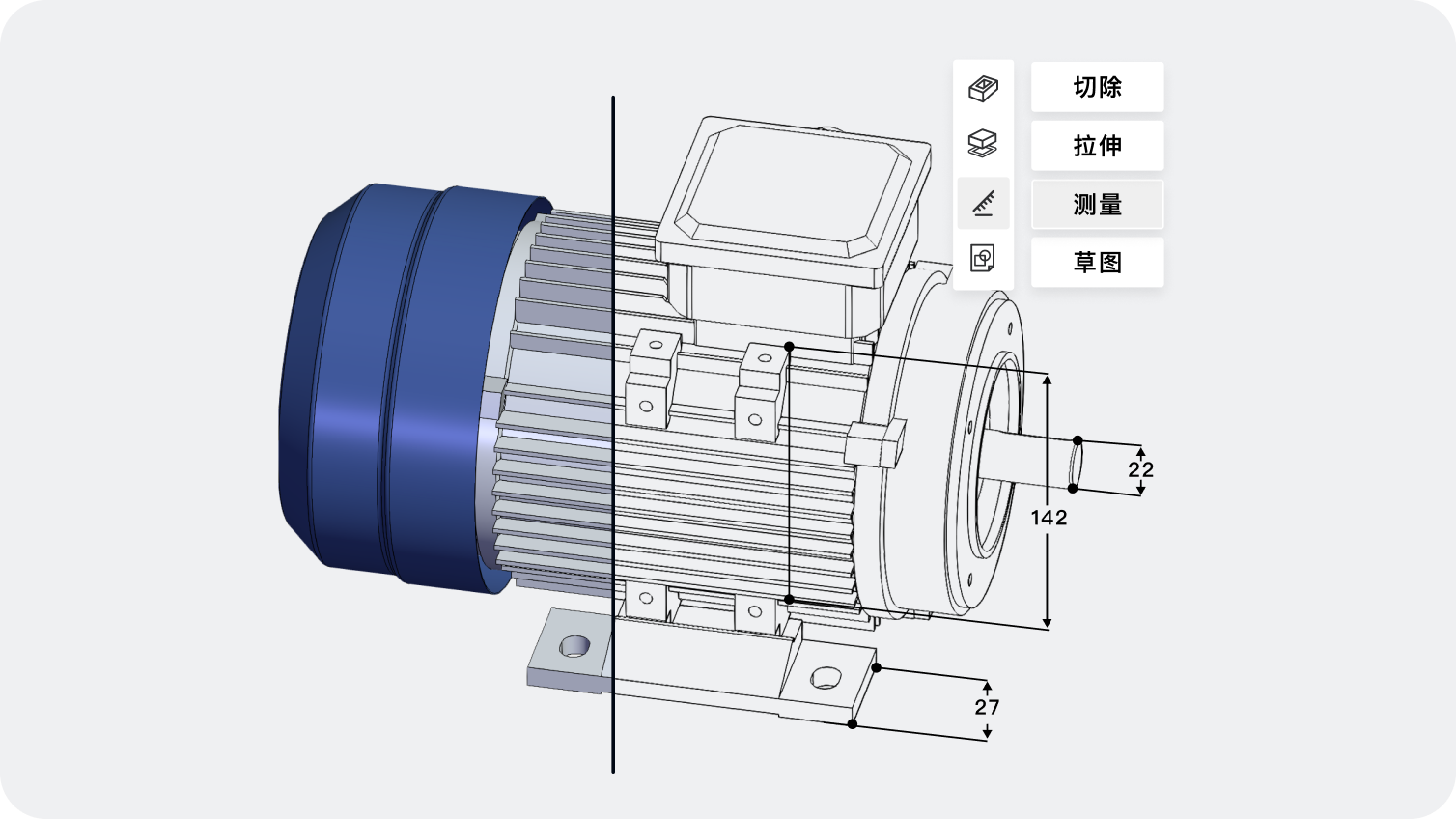

At Zixel, we believe interpretability will define the next era of CAD. Our cloud-native platform is designed around clarity: clear history, clear structure, and clear relationships across features. The AI tools inside Zixel rely on this transparency to help designers understand intent, evaluate risks, and make confident decisions. We do not see interpretability as a constraint. We see it as the foundation of better engineering. When models can speak for themselves, both humans and AI become more effective partners.

Why interpretability will become a core requirement for CAD

As AI becomes more deeply integrated into engineering workflows, clarity moves from a nice-to-have to an essential part of the toolchain. The future of CAD depends on systems that can explain their own behavior, giving teams the insight they need to design with confidence and intelligence.
